The aim of this pilot project is to automatically download and clean web content from a website. This particular website is of interest for my research because it showcases features of a written register I want to know more about. The idea is to gather a significant number of texts published on this website and put together a corpus that I can exploit.

For this pilot project, I decided to use a Colab notebook (Google Colaboratory). You can find more information on this environment here. In short, Colab lets you write and execute Python code online, using Google Drive to store, read and write your data.
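Reading and writing corpus files on Drive from the notebook could look something like the sketch below. It assumes the standard `google.colab.drive.mount` helper and falls back to the current working directory when run outside Colab. The `corpus` folder name and the `corpus_dir`/`save_text` helpers are hypothetical.

```python
from pathlib import Path


def corpus_dir(folder: str = "corpus") -> Path:
    """Return a folder for corpus files, on Google Drive when running in Colab.

    The default folder name "corpus" is a hypothetical choice for this sketch.
    """
    try:
        from google.colab import drive  # only importable inside Colab

        drive.mount("/content/drive")  # asks for authorization the first time
        base = Path("/content/drive/MyDrive")
    except ImportError:
        base = Path.cwd()  # outside Colab: fall back to the working directory
    out = base / folder
    out.mkdir(parents=True, exist_ok=True)
    return out


def save_text(name: str, text: str, folder: str = "corpus") -> Path:
    """Write one text to <folder>/<name>.txt and return its path (hypothetical helper)."""
    path = corpus_dir(folder) / f"{name}.txt"
    path.write_text(text, encoding="utf-8")
    return path
```

Writing each document to its own UTF-8 text file keeps the corpus readable by other tools later on, independently of the notebook.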

First, I looked through the Seedbank project for notebooks doing something similar, but found nothing suitable. Then I checked out some of the Python libraries available, and Beautiful Soup seemed to be very popular, so I gave it a go. As I plan to manipulate, clean and visualize some of the data I collect, I'll also need libraries that can do that job. So I'm using Pandas to manipulate the data and, among others, Matplotlib to plot it.
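One way to keep the collected texts together for later cleaning and plotting is a Pandas DataFrame with one row per document. The column names and the two sample rows below are placeholders, not data from the project.

```python
import pandas as pd

# Placeholder records: in the real project each row would come from a scraped page.
records = [
    {"url": "https://example.com/page-1", "title": "Page one", "text": "A short sample text"},
    {"url": "https://example.com/page-2", "title": "Page two", "text": "Another sample"},
]

corpus = pd.DataFrame(records)

# Simple per-document statistic: number of whitespace-separated tokens.
corpus["n_words"] = corpus["text"].str.split().str.len()

print(corpus[["title", "n_words"]])
```

From here, something like `corpus.plot.bar(x="title", y="n_words")` would hand the counts to Matplotlib for a quick visual check.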

The Beautiful Soup package I'll import is bs4, that is, Beautiful Soup version 4. For more information on this library, check out this page. Beautiful Soup is released under the MIT license.

What Beautiful Soup can do

A few examples from the documentation of what Beautiful Soup can do:

Extracting all the URLs found within a page's `<a>` tags:

```python
# `soup` is the documentation's "three sisters" example page
for link in soup.find_all('a'):
    print(link.get('href'))
# http://example.com/elsie
# http://example.com/lacie
# http://example.com/tillie
```
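To try the link extraction without depending on a live site, the same loop can be run against a small inline HTML string. The markup below is a trimmed, made-up version of the documentation's example page, and it uses Python's built-in `html.parser` so that `lxml` is not required.

```python
from bs4 import BeautifulSoup

# Made-up miniature page modeled on the documentation's "three sisters" example.
html_doc = """
<html><body>
<p class="story">Once upon a time there were three little sisters:
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>.</p>
</body></html>
"""

soup = BeautifulSoup(html_doc, "html.parser")

# Collect the href attribute of every <a> tag; .get() returns None if it is missing.
hrefs = [link.get("href") for link in soup.find_all("a")]
print(hrefs)
# ['http://example.com/elsie', 'http://example.com/lacie', 'http://example.com/tillie']
```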

Extracting all the text from a page:

```python
print(soup.get_text())
# The Dormouse's story
#
# The Dormouse's story
#
# Once upon a time there were three little sisters; and their names were
# Elsie,
# Lacie and
# Tillie;
# and they lived at the bottom of a well.
#
# ...
```
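Since the goal is clean text for a corpus, a plain `get_text()` call can leave runs of blank lines and, depending on the Beautiful Soup version, the contents of script or style elements. A hedged sketch of one common cleanup: remove `<script>` and `<style>` elements with `decompose()`, then join the remaining strings with `get_text(separator=..., strip=True)`. Which tags count as noise is an assumption that will depend on the site, and `clean_text` is a hypothetical helper name.

```python
from bs4 import BeautifulSoup


def clean_text(html: str) -> str:
    """Return the text of an HTML document, one non-empty string per line."""
    soup = BeautifulSoup(html, "html.parser")
    # Remove elements whose contents are not running text (assumed noise).
    for tag in soup(["script", "style"]):  # calling the soup is shorthand for find_all
        tag.decompose()
    # strip=True trims whitespace around each string and skips empty ones.
    return soup.get_text(separator="\n", strip=True)


sample = """
<html><head><title>Demo</title><style>p {color: red}</style></head>
<body>
  <h1>Heading</h1>
  <script>console.log("not text");</script>
  <p>First paragraph.</p>

  <p>Second paragraph.</p>
</body></html>
"""
print(clean_text(sample))
```

Note that the `<title>` text is kept as the first line; dropping it as well would just mean adding `"title"` to the list of removed tags.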

OK. Let's do this

I'm following the directions on datacamp.com, so you can do the same if you wish. The next four lines of code are essential:

```python
from urllib.request import urlopen
from bs4 import BeautifulSoup

url = "https://www.who.int/emergencies/diseases/novel-coronavirus-2019/advice-for-public/myth-busters"
html = urlopen(url)
```
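Some servers refuse requests that carry urllib's default `User-Agent`, and `urlopen()` waits indefinitely unless a timeout is given. A hedged variant of the fetch step wraps the URL in a `urllib.request.Request` with an explicit header and passes a timeout. The header value, the 10-second timeout and the helper names `build_request`/`fetch_html` are arbitrary choices for this sketch, not requirements of the WHO site.

```python
from urllib.request import Request, urlopen

# Arbitrary, hypothetical identifier for this sketch; any descriptive string works.
USER_AGENT = "Mozilla/5.0 (compatible; corpus-pilot/0.1)"


def build_request(url: str) -> Request:
    """Wrap the URL in a Request that sends an explicit User-Agent header."""
    return Request(url, headers={"User-Agent": USER_AGENT})


def fetch_html(url: str, timeout: float = 10.0) -> bytes:
    """Download the raw HTML; raises urllib.error.URLError (or a timeout error) on failure."""
    with urlopen(build_request(url), timeout=timeout) as response:
        return response.read()
```

Usage would be `html = fetch_html(url)` followed by `BeautifulSoup(html, 'lxml')`; Beautiful Soup accepts the raw bytes and works out the encoding itself.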

We have asked our notebook to open this web page and fetch its contents. Now we create a BeautifulSoup object from the HTML. 'lxml' is the parser we tell BS4 to use (it also supports Python's built-in 'html.parser' and 'html5lib'), and html is, so to speak, our web page.
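Because `lxml` is a separate package, the `BeautifulSoup(html, 'lxml')` call raises `bs4.FeatureNotFound` when it is not installed. One possible fallback, sketched below, is to try `lxml` first and drop back to Python's built-in `html.parser`. The helper name `make_soup` is hypothetical.

```python
from bs4 import BeautifulSoup, FeatureNotFound


def make_soup(markup) -> BeautifulSoup:
    """Parse markup with lxml if available, otherwise with the built-in html.parser."""
    try:
        return BeautifulSoup(markup, "lxml")
    except FeatureNotFound:
        # lxml is not installed in this environment.
        return BeautifulSoup(markup, "html.parser")


demo = make_soup("<p>Hello, <b>corpus</b></p>")
print(demo.p.get_text())  # Hello, corpus
```

The parsers can build slightly different trees from malformed HTML, so once a corpus is being collected it is safer to stick to one of them.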

```python
soup = BeautifulSoup(html, 'lxml')
type(soup)
# bs4.BeautifulSoup
```

Once we have created the object, we can extract information from it.

```python
title = soup.title
print(title)
```

This will print the title of the page, tag included:

```
<title> Myth busters </title>
```
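`soup.title` returns the whole `<title>` tag. For a corpus one usually wants just its text, and `get_text(strip=True)` also removes the surrounding spaces visible in that output. The inline HTML below imitates the page title and is only for illustration.

```python
from bs4 import BeautifulSoup

# Illustrative markup that mimics the title printed above.
soup = BeautifulSoup("<html><head><title> Myth busters </title></head></html>", "html.parser")

print(soup.title)                       # <title> Myth busters </title>
print(soup.title.name)                  # title
print(soup.title.get_text(strip=True))  # Myth busters
```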

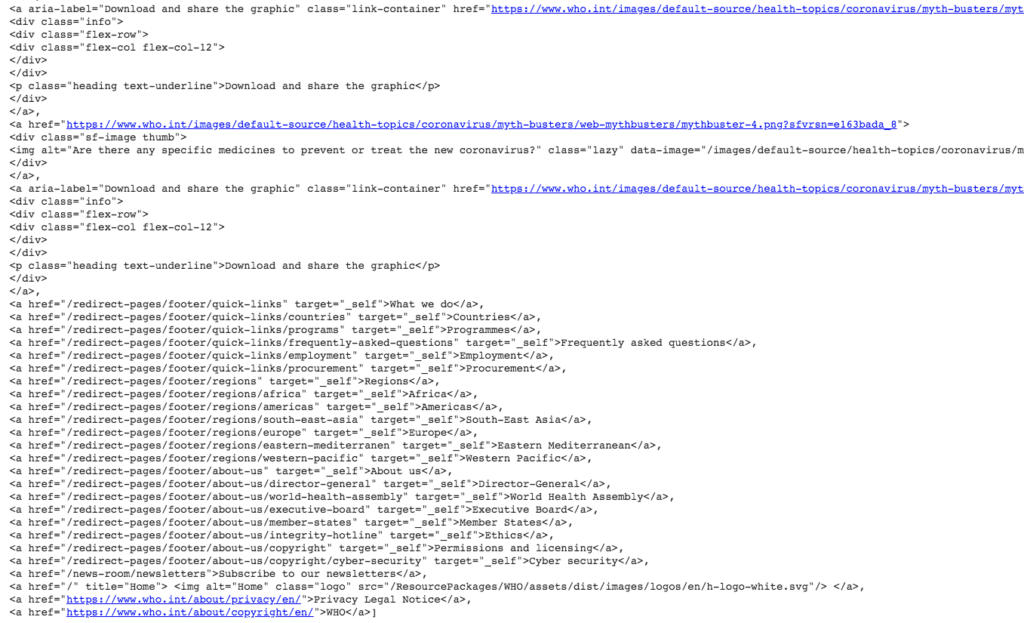

The `find_all()` method of `soup` extracts HTML tags within a web page. For example,

```python
soup.find_all('a')
```

returns a list of all the `<a>` tags, including their attributes.
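`find_all()` also accepts attribute filters, and each returned tag exposes its attributes through `.attrs` (a dict) or item access such as `a["href"]`. The sketch below runs on made-up markup: it keeps only the links that actually have an `href`, reads their text and URL, and resolves relative links with `urllib.parse.urljoin`. The base URL is a placeholder.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

# Made-up markup: one relative link, one absolute link, one anchor without href.
html_doc = """
<a href="/news" class="nav">News</a>
<a href="https://example.org/about">About</a>
<a name="top">Top</a>
"""
base_url = "https://example.com/"  # placeholder base URL for resolving relative links

soup = BeautifulSoup(html_doc, "html.parser")

links = []
for a in soup.find_all("a", href=True):  # href=True skips <a> tags without an href
    links.append({
        "text": a.get_text(strip=True),
        "url": urljoin(base_url, a["href"]),
        "attrs": a.attrs,  # every attribute of the tag, as a dict
    })

for link in links:
    print(link["text"], link["url"])
```

Multi-valued attributes such as `class` come back as lists (here `['nav']`), which is worth remembering when filtering links by class.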

I'm also using https://realpython.com/beautiful-soup-web-scraper-python/ as a guide.